I wanted to clarify (in my own mind) what it means to be “woke” or “anti-woke,” and how censorship relates to both concepts.

While it’s hard to agree on what these words describe, many people are convinced that they themselves are either one or the other.

First, I wrote about 500 words.

Then I downloaded ChatGPT to see where the app would take me.

To my surprise, ChatGPT was useful.

The ChatGPT text was as dry and colorless as expected.

Moreover, the essays were full of platitudes and contained falsehoods.

However, ChatGPT did offer some ideas that I hadn’t thought of.

When I asked ChatGPT to define “woke,” I received an essay saying (in part) that:

To be woke signifies a deep commitment to social consciousness and active engagement with systemic injustices. . . and a willingness to challenge the status quo.

The significance of being woke lies in its potential to bring about meaningful change, fostering a more inclusive, equitable, and just society for all.

When I asked ChatGPT for a definition of “anti-woke,” I received an essay saying that:

Being anti-woke represents a rejection of what critics perceive as the dogmatic and stifling aspects of the woke ideology.

It emphasizes individual responsibility, intellectual diversity, and the importance of free thought and expression.

While acknowledging the existence of systemic injustices, the anti-woke movement encourages a more nuanced and balanced approach to social issues . . . and open dialogue.

When I asked whether censorship was an issue on the political right or left, I received an essay saying that:

Censorship is not inherently a political right or left issue, but rather a complex and multifaceted dilemma that intersects with various ideologies.

While the political right may often advocate for censorship to protect societal values, the political left may prioritize censorship as a tool to combat discrimination and promote inclusivity.

The ChatGPT response to my request for an essay on “culture wars” was mere gobbledygook.

It ended with the sentence: “Only through a commitment to mutual respect and a willingness to confront these issues head-on can the United States move towards healing and reconciliation.”

Well, that’s completely useless!

I would disagree with the ChatGPT definition of “woke” in several ways.

“To be woke” doesn’t always mean “a willingness to challenge the status quo.”

Often, it just means being a bit more open to societal change.

“Woke” people are usually more open to erasing words like “master bedroom” from their vocabularies, using personal pronouns in their email signatures, and being more aware of microaggressions.

At times, it only means that the “woke” are more willing to face uncomfortable information and learn from history.

I would also argue with the ChatGPT definition of “anti-woke.”

While “being woke” is perceived by the anti-woke as dogmatic, it’s difficult to figure out which beliefs are actually in contention.

It’s as if the perceived self-satisfaction of the woke is more distressing than their actual ideas.

“Collective guilt” and “cancel culture” came up in the ChatGPT essay, but I’m sure that only a small percentage of “the woke” feel guilt.

Further, the woke are more likely than the anti-woke to cancel people on their own side.

(Think of comedian Kathy Griffin and former Senator Al Franken.)

I also wonder what percentage of the anti-woke “acknowledge the existence of systemic injustices,” or desire an “open dialogue” (as suggested by ChatGPT).

Overall, being anti-woke may only mean that you are unhappy with the speed, or the very existence, of societal change, or that you find “woke” people annoyingly self-righteous.

I was very happy with the ChatGPT response on censorship.

Saying that the political right wants to “protect societal values,” while the political left wants to “combat discrimination and promote inclusivity” just about sums it up.

However, everyone has their own thoughts about what our societal values should be, which words are good or bad in promoting inclusivity, and whether “words” are important in this task.

In order to “promote inclusivity,” the publisher of the late Roald Dahl recently produced two different versions of James and the Giant Peach: a Puffin edition that changes “Cloud-men” to “Cloud-people” (among other revisions), and a classic Penguin edition that keeps “Cloud-men.”

The spy thrillers of Ian Fleming and the mysteries of Agatha Christie underwent similar revisions.

Combating racial and other types of discrimination by “sanitizing” works, or even cancelling them, isn’t new.

I remember debates in the 1970s about whether 1939’s Gone with the Wind should be banned.

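Disney’s 1946 blend of live-action and animation, Song of the South,* is not, in the words of Disney CEO Bob Iger, “appropriate in today’s world.” It hasn’t been shown in U.S. theaters since its 1986 re-release, and it has never been released on home video in the United States.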

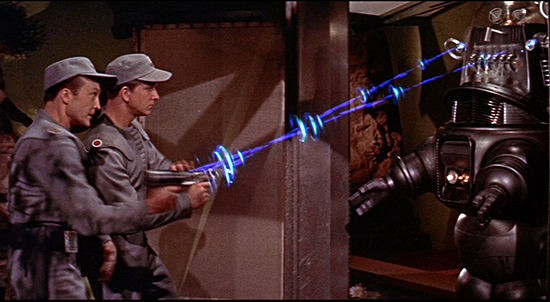

Some Warner Bros. cartoons (like “Herr Meets Hare” and “Daffy – The Commando,” produced as propaganda between 1941 and 1945) were restored and rereleased in 2008, along with a lengthy disclaimer.

(Volume 6 of the Looney Tunes Golden Collection.)

However, some of the more racially insensitive 1930s and World War II cartoons (for example, “Tokio Jokio”) will likely never see the light of day, at least not in an official release.

Meanwhile, in order to “protect societal values,” some U.S. school boards are removing classic children’s books (like Charlotte’s Web and A Wrinkle in Time) from their school library shelves.

(I mention Charlotte’s Web and A Wrinkle in Time because these were two of my favorites.)

I looked up why one parent group proposed removing 1952’s Charlotte’s Web: the parents disliked that characters die, and thought that “talking animals” were “disrespectful to God.”

A Wrinkle in Time (1962) was criticized for “promoting witchcraft.”

I have fond memories of both books.

I remember my fourth-grade teacher, Mrs. Simmons, reading Charlotte’s Web aloud to us.

(I adored Mrs. Simmons.)

I checked out A Wrinkle in Time from our public library during the 1960s, and ended up reading every other book I could find by Madeleine L’Engle.

Is it “woke” to buy a children’s book like 2005’s And Tango Makes Three—a story about two male penguins who help raise a chick together—in order to foster a more inclusive society?

Is it “anti-woke” to ask that And Tango Makes Three be removed from your public library, so that children won’t be influenced to accept homosexuality as normal?

In the end, I agree with those who support parents’ right to keep their own children from reading certain books, but not their right to deny librarian-approved books to everyone else.

*Song of the South was based on the once well-known Uncle Remus stories. The folklorist and author behind them was Joel Chandler Harris (1848-1908), a white journalist. Harris wrote down the Br’er Rabbit and Br’er Fox tales after listening to African American folk tales told by former slaves, primarily George Terrell. According to the Atlanta Journal-Constitution (11/2/2006), Disney Studios purchased the film rights to the Uncle Remus stories from the Harris family in 1939 for $10,000, the equivalent of roughly $218,000 today.